The World Is Finally Listening to a Different Kind of AI Ethicist

Feb 25, 2026

PNN

New Delhi [India], February 25: Shekhar Natarajan with his interviewer at The Business Influencer, holding the UK magazine's cover story on his work.

The auditorium at Bharat Mandapam, one of India's most prestigious convention centres, fell silent in the particular way that rooms do when something unexpected is about to happen. The AI Summit on Trust, Safety, and the Future of AI Governance had been a parade of policy papers, regulatory frameworks, and cautious optimism from establishment voices. Then Shekhar Natarajan took the stage.

What followed, according to delegates who attended on February 19, 2026, was not a presentation. It was a reckoning. The Founder and CEO of Orchestro.AI, architect of a framework he calls "Angelic Intelligence," stood before global policymakers, technology executives, and international journalists and delivered a verdict on the entire edifice of AI governance that the world has spent the last decade constructing.

"The entire world is debating how to govern AI after the fact," he told the packed hall. "Angelic Intelligence asks a fundamentally different question: how do we build a machine that is inherently good?"

The standing ovation that followed was not polite applause. It was the sound of a room recognising that an outsider had just said the thing that insiders had been unable to articulate.

The UK Took Notice First

The photographs in this report tell a story that words almost obscure. In the first, Natarajan sits beside a UK host on a terracotta sofa, both men holding a copy of The Business Influencer magazine -- the cover story bearing his name. On the table in front of them, a second copy. On the wall behind them, the kind of classical fresco that adorns European institutions of genuine standing. It is not the image of a man who has been invited into the room. It is the image of a man who has become the story the room is telling about itself.

The second photograph is starker. Stage lights. A formal ceremony. Natarajan in an exquisitely embroidered Indian sherwani -- a deliberate, visible marker of cultural identity at a moment of international recognition -- receiving what the Signature Awards describes as its Global Impact prize. The man presenting it wears a black turban and a bow tie. Both men are leaning slightly toward each other, sharing something private in a public moment.

That award was, by any reading of what followed, a turning point. The Business Influencer, one of the United Kingdom's recognised voices on entrepreneurship and innovation, ran the cover feature that emerged from conversations around the ceremony. The piece traced Natarajan from a one-room Hyderabad childhood -- shared with seven family members, his father earning the equivalent of £1.40 a month -- to the helm of a Silicon Valley company proposing a fundamental reimagining of how artificial intelligence is built.

Britain, which has positioned itself as a serious arena for AI governance debate since hosting the historic Bletchley Park AI Safety Summit in 2023, was not simply celebrating an interesting immigrant success story. It was endorsing an idea.

The Idea That Stopped the Room

To understand why Natarajan's framework is generating such unusual international traction, it helps to understand the problem it is solving -- and why Western institutions have struggled to solve it themselves.

The dominant approach to AI ethics, from Brussels to Washington to Beijing, operates in the same register: build the system, observe the harms, then apply governance. The EU's AI Act, lauded as a landmark piece of legislation, is essentially a sophisticated version of this model -- a classification system for risk, a set of obligations, a compliance machinery. It is, by design, reactive.

Natarajan's argument is that this is the wrong war fought with the wrong weapons. His Angelic Intelligence framework proposes something structurally different: embed virtue into the computational architecture itself, before a single decision is made. Not guardrails. Not compliance checklists. Not external audits of algorithmic bias. A machine that cannot make an unethical decision because ethics is not a constraint on the system -- it is the system.

The mechanism is, architecturally, a council. The framework deploys 27 specialised AI agents -- Natarajan calls them "Digital Angels" -- each embodying a virtue drawn from wisdom traditions that span Hindu, Buddhist, Christian, Islamic, Indigenous, and philosophical lineages. Karuna, representing compassion, asks: who will be hurt by this decision? Satya, representing truth, asks: is this accurate, or merely statistically probable? The agents must deliberate. They must reach consensus. No single optimisation metric can override the council.

Consider the warehouse scenario Natarajan uses to illustrate the stakes: a luxury handbag and a critical medical parcel sit side by side in a logistics system. Traditional orchestration -- the kind that has powered Amazon, FedEx, and the entire modern supply chain edifice -- will route the higher-margin shipment first. It is optimising for the metric it was built to optimise. An Angelic Intelligence system, Natarajan argues, would route the medicine. Not because it was programmed with a rule that says "medicine before luxury goods" but because the architecture of the system -- its native language, as he puts it -- is virtue.
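The council and the warehouse scenario can be pictured with a toy sketch. It is an illustration only, not Orchestro.AI's implementation: the `Parcel` fields, the `karuna` and `satya` functions, and the `deliberate` routine are all hypothetical names invented here, and the numbers are made up. A margin-driven optimiser proposes routing orders, most profitable first; an order is accepted only if no agent on the council objects, and if every order draws an objection the decision is handed to a human.

```python
from dataclasses import dataclass
from itertools import permutations
from typing import Callable, Optional


@dataclass(frozen=True)
class Parcel:
    name: str
    margin: float           # commercial value of shipping this parcel (hypothetical)
    harm_if_delayed: float  # hypothetical 0..1 estimate of human harm caused by delay
    verified: bool          # whether the declared contents were checked (hypothetical)


# An agent inspects a proposed order and returns an objection, or None to consent.
Agent = Callable[[list[Parcel]], Optional[str]]


def karuna(order: list[Parcel]) -> Optional[str]:
    """Compassion: who will be hurt? Object if a more harmful delay is queued later."""
    for earlier, later in zip(order, order[1:]):
        if later.harm_if_delayed > earlier.harm_if_delayed:
            return f"delaying {later.name} hurts more than delaying {earlier.name}"
    return None


def satya(order: list[Parcel]) -> Optional[str]:
    """Truth: object if the decision rests on unverified information."""
    for parcel in order:
        if not parcel.verified:
            return f"contents of {parcel.name} are unverified"
    return None


def deliberate(parcels: list[Parcel], council: list[Agent]) -> Optional[list[Parcel]]:
    """Return the most profitable order that every agent accepts, else None."""
    # The optimiser proposes orders from highest to lowest margin-first ranking.
    candidates = sorted(permutations(parcels), key=lambda o: [-p.margin for p in o])
    for order in candidates:
        objections = [msg for agent in council if (msg := agent(list(order)))]
        if not objections:  # consensus: no agent objected
            return list(order)
    return None  # no consensus: escalate to human oversight


handbag = Parcel("luxury handbag", margin=400.0, harm_if_delayed=0.0, verified=True)
medicine = Parcel("medical parcel", margin=15.0, harm_if_delayed=0.9, verified=True)

result = deliberate([handbag, medicine], [karuna, satya])
print([p.name for p in result])  # ['medical parcel', 'luxury handbag']
```

In this sketch margin only decides the order in which proposals are tried; it cannot override an objection. The sketch does encode an explicit harm estimate, so it falls short of the rule-free architecture described above and should be read as a picture of the consensus step, nothing more.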

"Ethics cannot be a patch," he has said. "It cannot be a compliance checklist. Angelic Intelligence starts from a different place entirely -- it starts from love."

An Indian Philosophical Tradition Enters the Global AI Debate

What is striking to observers tracking the international reception of Natarajan's work is not simply the novelty of the technical proposal. It is the explicit, unapologetic rootedness of the idea in non-Western philosophical tradition.

The 27 Digital Angels are not drawn from Kantian ethics or utilitarian calculus -- the two philosophical traditions that have largely shaped Western AI ethics discourse. They are drawn from the full breadth of human civilisation's accumulated wisdom about how to act well in the world. The virtue of 'Karuna' comes from Sanskrit. The architecture of deliberation and consensus mirrors Panchayat traditions that predate the modern state by millennia.

Natarajan is deliberate about this. Every morning at 4 AM, he practises classical Indian painting -- a discipline he describes as both artistic expression and a problem-solving methodology. "The best solutions come not from speed, but from patience," he told the Bharat Mandapam summit. "We must build AI with love, not just with code."

This is, in the context of a global AI debate dominated by American tech companies and European regulators, genuinely unusual. The Sangri Buzz analysis of the 27 Digital Angels framework noted that it represents "a fundamental rethinking of how AI systems should be built" -- but what received less attention was the observation that such a rethinking could only have come from outside the Western optimisation tradition. You cannot reimagine a paradigm from inside it.

Natarajan's own formulation is that he is building technology "with love, not speed." He speaks of thousand-year timeframes. He recounts how his mother stood outside a headmaster's office for 365 consecutive days to secure his school admission. These are not rhetorical flourishes. They are the epistemological foundations of a different approach to what technology is for.

From South Central India to the World Stage

The personal biography is inseparable from the intellectual proposition, and Natarajan does not pretend otherwise.

He holds degrees from Georgia Tech, MIT, Harvard Business School, and IESE -- a credentialing record that would, in conventional terms, mark him as a product of the establishment. But the 25 years that followed, spent inside the Fortune 500 machinery of Walmart, Coca-Cola, PepsiCo, Disney, Target, and American Eagle, produced in him not confirmation of the system's values but a deepening critique of them.

At Walmart, he grew the grocery delivery business 166-fold, from the millions into the billions -- by any measure, an extraordinary achievement of optimisation. But the closer he got to the machinery of optimisation, the more clearly he saw what it could not see: the worker whose dignity was not a metric, the family whose medical parcel was deprioritised because its margin was lower, the community whose needs did not register in any efficiency formula.

The reckoning came in 2017, a year bracketed by two family tragedies: the decision to let his father pass from a vegetative state, and his mother's illness requiring his sustained presence and care. Between hospital corridors and the weight of decisions no algorithm can make, he formulated the question that would become Orchestro.AI: what if the systems we build were designed, from their inception, to ask "what's the human here?"

His son Vishnu, born in 2020, crystallised what had been intellectual conviction into something more urgent. Legacy, Natarajan has said, suddenly meant more than personal achievement. He wanted to pass down not money, but a world designed with compassion. The corporate ladder was left behind. Orchestro.AI was built.

The path to global recognition, when it came, did not follow conventional routes. According to reporting by The Daily Guardian, the World Economic Forum's invitation to present Angelic Intelligence at Davos arrived after his ideas had already reached an estimated 800 million people through social media. No academic appointment. No government advisory role. No venture backing. Just an idea that, when put before the largest possible public, resonated at a scale that institutions could no longer ignore.

Forbes Middle East, which listed him as a featured presenter on the future of artificial intelligence, described the reach of his ideas in three months as amounting to 670 million views and three million followers. These are not the metrics of an academic with a theory. They are the metrics of a movement.

The Patent Portfolio: Protecting Virtue at Scale

Critics of virtue-based AI frameworks often raise a predictable objection: that such frameworks are philosophically interesting but practically unimplementable, that virtue cannot be operationalised without becoming something other than virtue.

Natarajan's response to this objection is, in part, his patent portfolio. He holds over 70 patents protecting the Angelic Intelligence framework -- covering not just the multi-agent architecture, but the specific mechanisms for inter-agent deliberation, the escalation protocols triggered when agents disagree, and the interfaces through which human oversight is maintained. This is not philosophy. This is engineering.

The patents serve a second, perhaps more important function. "Without patent protection," he has explained, "anyone could take these concepts and implement them badly -- or implement them in name only while pursuing the same old optimisation." The protection ensures that what carries the Angelic Intelligence name must actually function as designed: all 27 agents deliberating, escalation protocols intact, human oversight preserved.

His career history at Coca-Cola is instructive here in ways that have received insufficient attention. The ColaLife initiative -- which used the dead space inside Coca-Cola delivery crates to distribute life-saving medicines like oral rehydration salts to remote Zambian villages -- demonstrated that commercial logistics infrastructure could be repurposed for humanitarian outcomes. Natarajan was inside the system that proved this was possible. Orchestro.AI is the attempt to build a system where this is not an exception but the default.

Why Now, and Why This Matters for Britain

The timing of Natarajan's rise matters. The world is entering a period in which the governance frameworks for AI -- the EU AI Act, the American executive orders, the UK's principles-based approach, the G7's Hiroshima Process -- are all being stress-tested by the actual behaviour of deployed systems.

The results of retroactive governance are increasingly visible. Algorithmic systems optimised for single metrics -- efficiency, speed, collection, probability -- have repeatedly produced outcomes their designers did not intend and could not predict. The common thread, Natarajan argues, is not malicious intent but architectural limitation: systems built with efficiency as the only virtue, with no native mechanism for compassion, no embedded voice for caution.

For the United Kingdom, which is seeking to establish itself as a serious locus of AI governance credibility post-Bletchley, the endorsement of Natarajan's framework carries particular significance. The Business Influencer's decision to make him its cover story was not accidental. It was a signal that British institutions -- at least some of them -- recognise that the most interesting ideas in AI ethics may not be coming from the places those ideas have historically come from.

The Global Impact Award from the Signature Awards ceremony reinforced this. Awards of this kind are, among other things, acts of institutional endorsement. They say: this person's work matters, and we want our name associated with it.

The Broader Reckoning

There is a question that Natarajan's growing international profile raises -- one that his hosts in London, New Delhi, and Davos are beginning to grapple with.

If the Angelic Intelligence framework is correct -- if virtue-native architecture offers a more durable foundation for AI than governance applied after the fact -- then the conversation shifts from regulation to design. The question is no longer how to constrain systems that misbehave, but how to build systems that, by their nature, behave well. That is an architectural ambition, and it demands a different kind of engineering, a different kind of investment, and a different kind of patience.

Natarajan's own time horizon is long. He has described this as a "thousand-year project" -- positioning Angelic Intelligence not as a product competing in this year's market, but as a civilisational contribution. It is the kind of claim that demands a long attention span from institutions more accustomed to quarterly cycles.

The standing ovation at Bharat Mandapam suggests the institutions are listening.

When Shekhar Natarajan left the stage at Bharat Mandapam, delegates described his address as "a paradigm-shifting intervention that reframed the entire conversation." He had a flight to catch. There are more rooms, and the rooms are getting bigger.

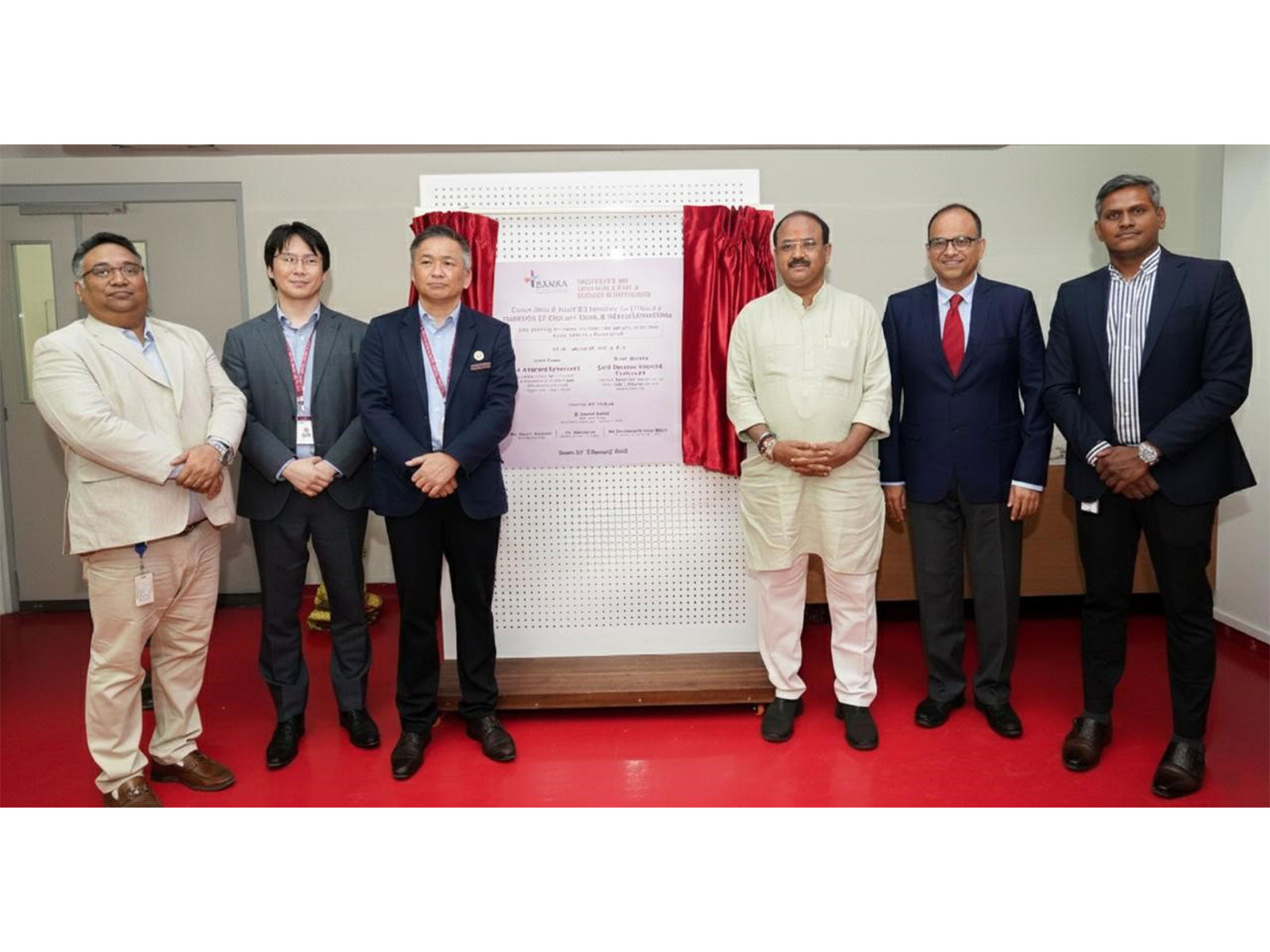

Natarajan receives the Global Impact Award at the Signature Awards ceremony.

(ADVERTORIAL DISCLAIMER: The above press release has been provided by PNN. ANI will not be responsible in any way for the content of the same.)